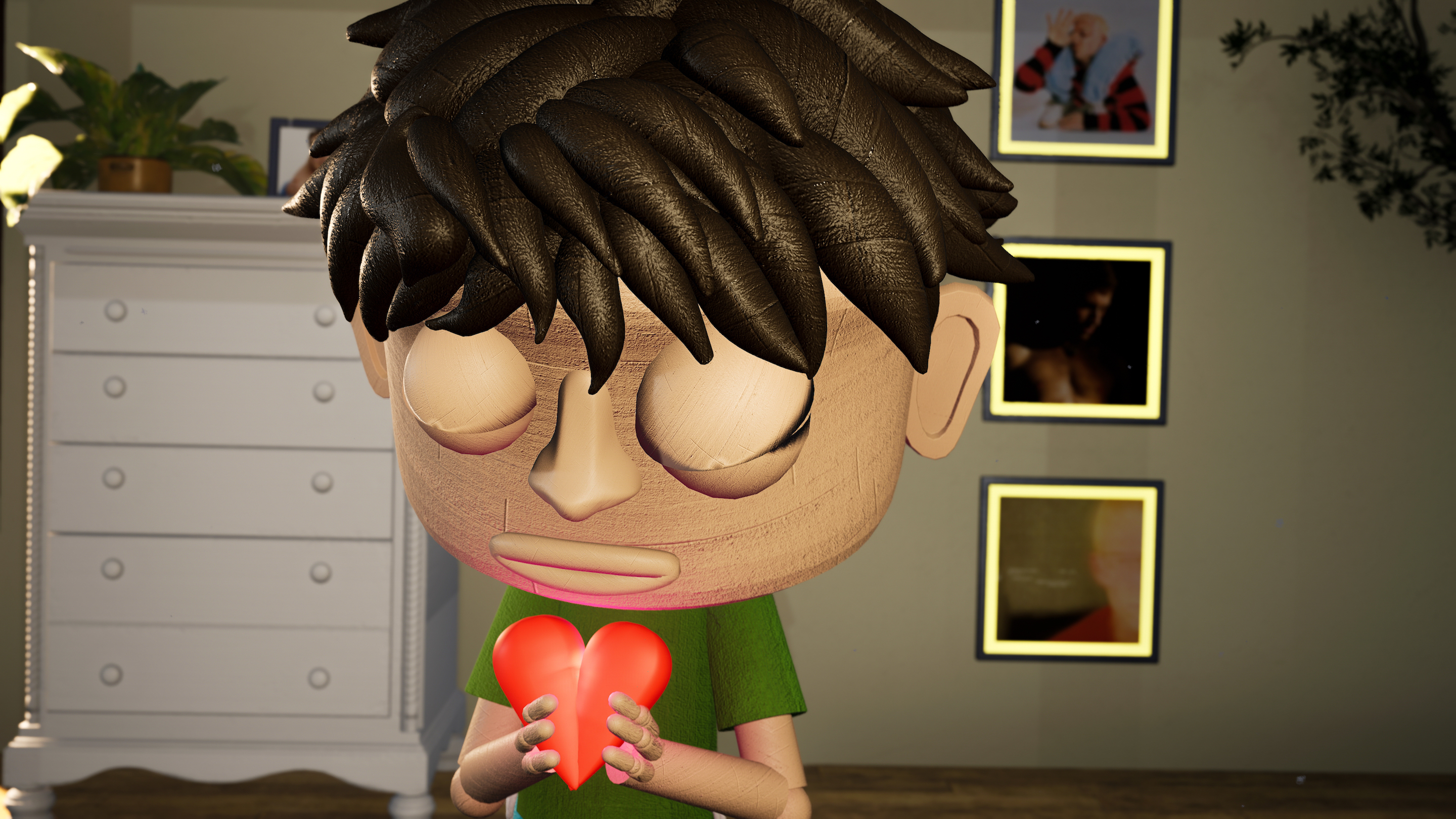

How one person with Faceware and Unreal created an entire music video for LA-based singer/songwriter Sally Boy.

Recently, a virtual production arm at Area of Effect was tasked with seeing what one person could do when armed with the right technology. From that came the idea of a music video, which RCA and Loud Robot greenlit. The result is the video for "I Love U," a song by LA-based musician Sally Boy.

We recently had the opportunity to sit down with Bryce Cohen, the single creator who put Faceware and Unreal through their paces to create the video. The full interview is below:

How did this project come about? What was the impetus for the project?

"I Love U" came about from multiple angles. Devin Ehrig, the director of virtual production at AOE, challenged me to showcase what one person could do with a game engine. Coincidentally, that same month, Jake Zaotis (the project’s director) approached me asking if we could do a music video in the game engine. After a few weeks of figuring out what we could do in a reasonable amount of time, we approached Loud Robot/RCA with a concept for Sally Boy’s song.

What were your concerns coming into the project from a production standpoint?

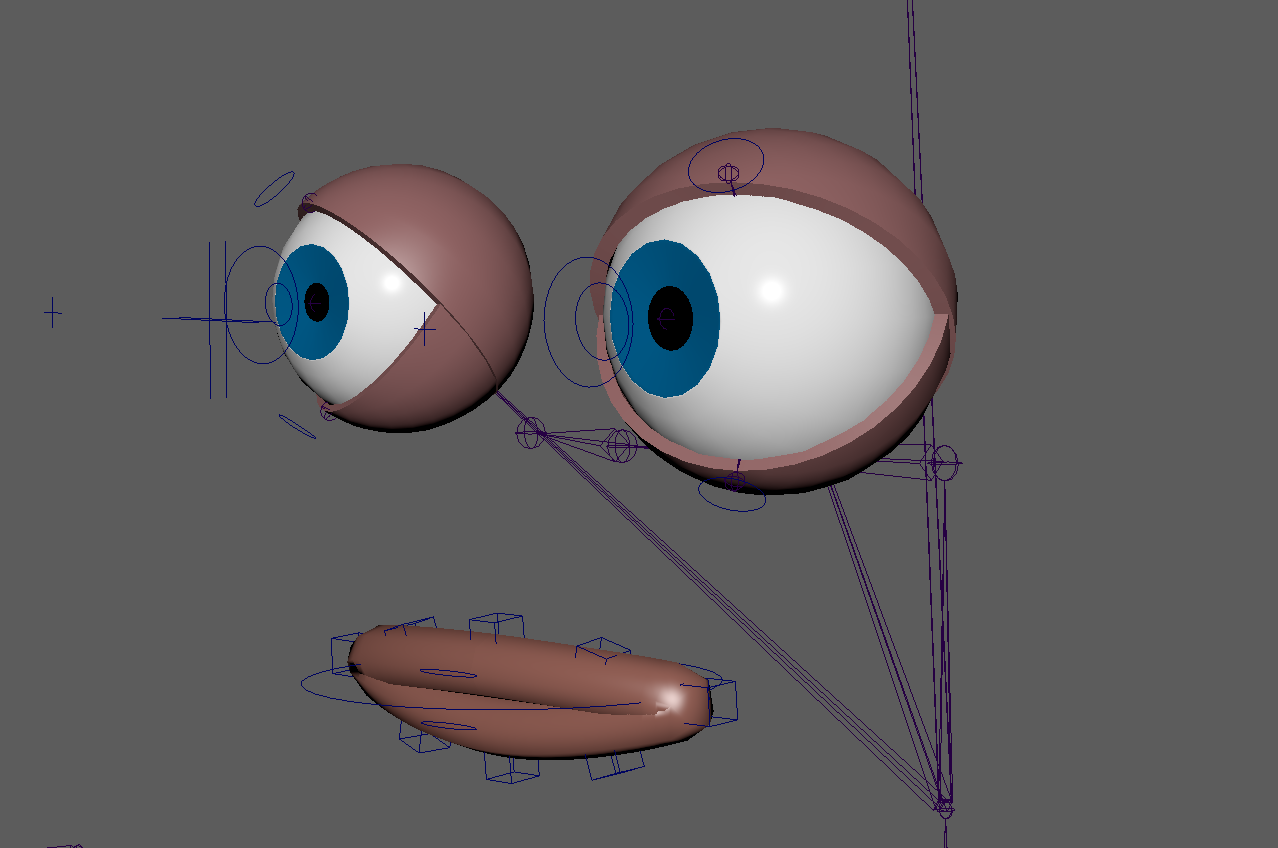

Everything was a concern for this project. From the get-go, I knew my way around the engine, I knew how to do real-camera tracking, and I knew how to use Faceware on a Metahuman – that was it. So the concerns included rigging a custom character for mocap and facecap, and specifically, figuring out how to create a custom rig for the Faceware blendshapes that uses multiple meshes. Everything was possible in theory, but these were problems that we would have to solve during production.

How did you choose your production technology?

We used what I had available thanks to AOE’s work in previz. That included Faceware Studio and HTC Vive camera tracking. Beyond that, I knew we would need an inertial suit to bring as much physical motion into the digital world as possible.

What were you trying to achieve with your facial animation?

The story did not call for any speaking, but we did want the character to feel real and somewhat human. We simply did not have the bandwidth or skill to animate the face properly, so facial tracking for those little details was our only option.

How did Faceware help you achieve that?

Faceware allowed us to create high-quality facial animation data for our character quickly and reliably.

What tips/tricks would you suggest for other single artists/small teams about to embark on their projects?

Don’t cut scope out just because you haven’t done certain things before. All of these tools are becoming extremely user-friendly and there is a ton of support online for them. Take on the challenge.

Anything else you’d like to add?

Shoutout to the Faceware Support Discord and Feeding Wolves for her videos!
